rand thompson

RED KOMODO MONOCHROME w/ LEICA M 0.8 PRIME 50mm NOCTILUX 0.95 NIGHT TEST

by Keith Morton

Howdy Hill | Red Komodo Monochrome

by Moviesauce

Shot on Red Komodo Monochrome with Sigma Art 24-35mm

The video is of very poor quality. There is obnoxious banding.

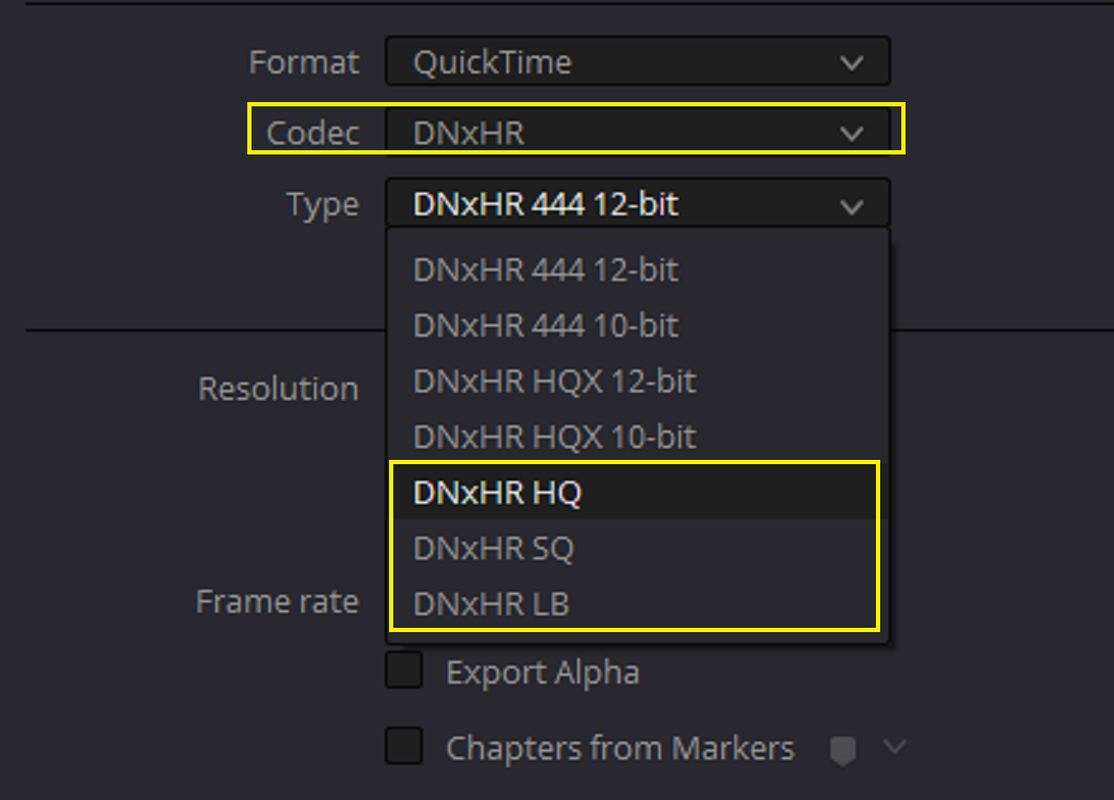

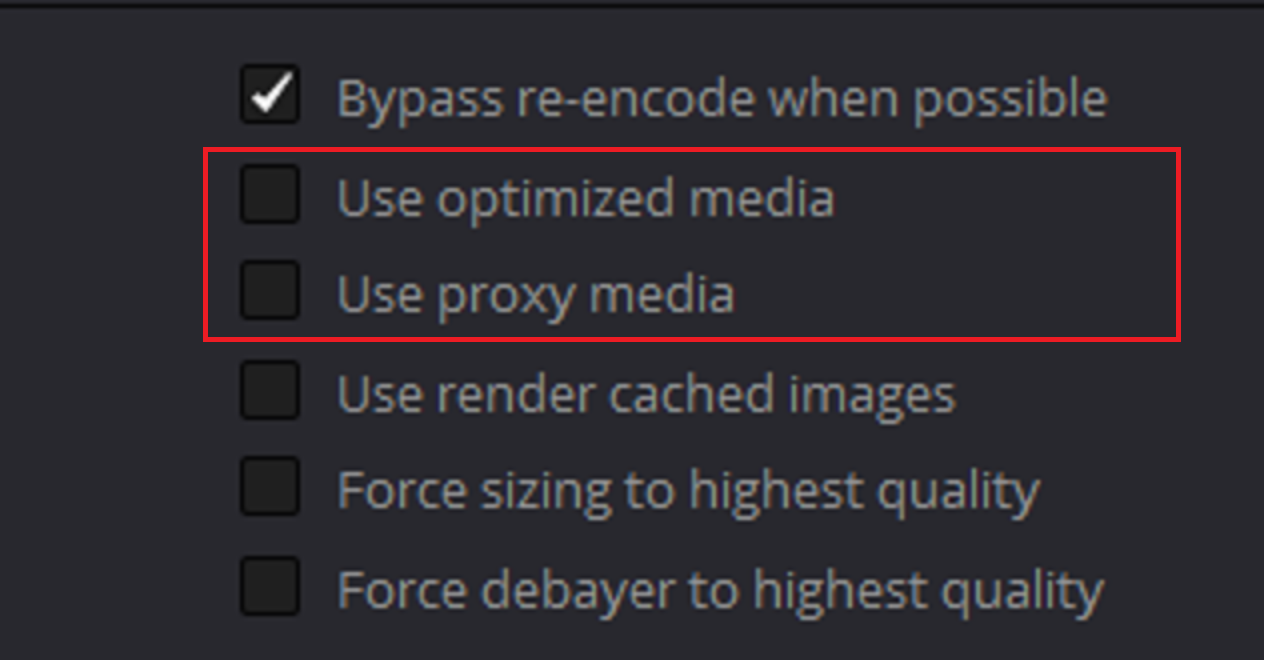

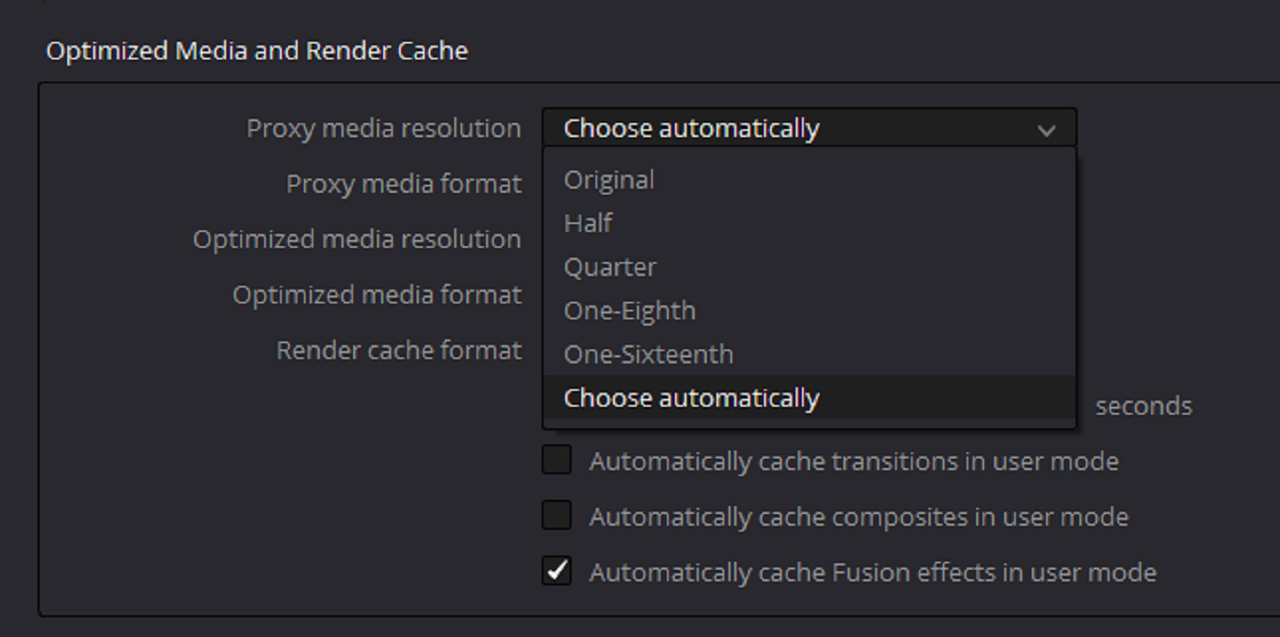

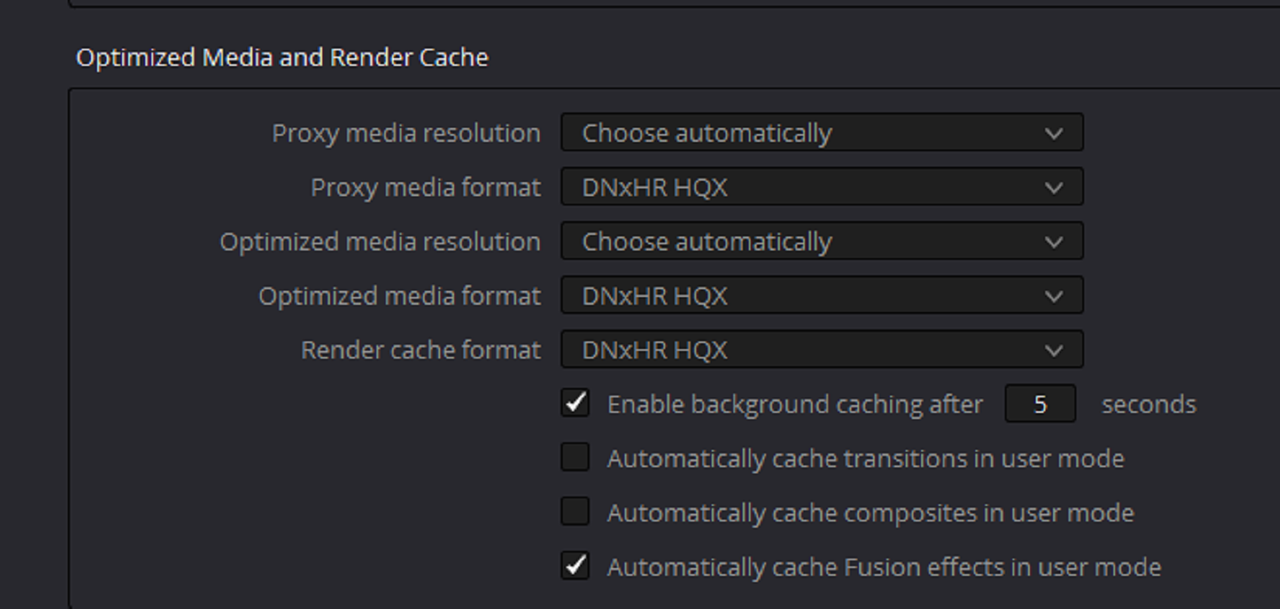

That's a byproduct of YouTube's recompression/re-encoding, and likely of the export settings as well.

Looks great. I just can’t see myself shooting monochromatic video. Mind you, I often convert my still images to B&W.
