Michel Hafner



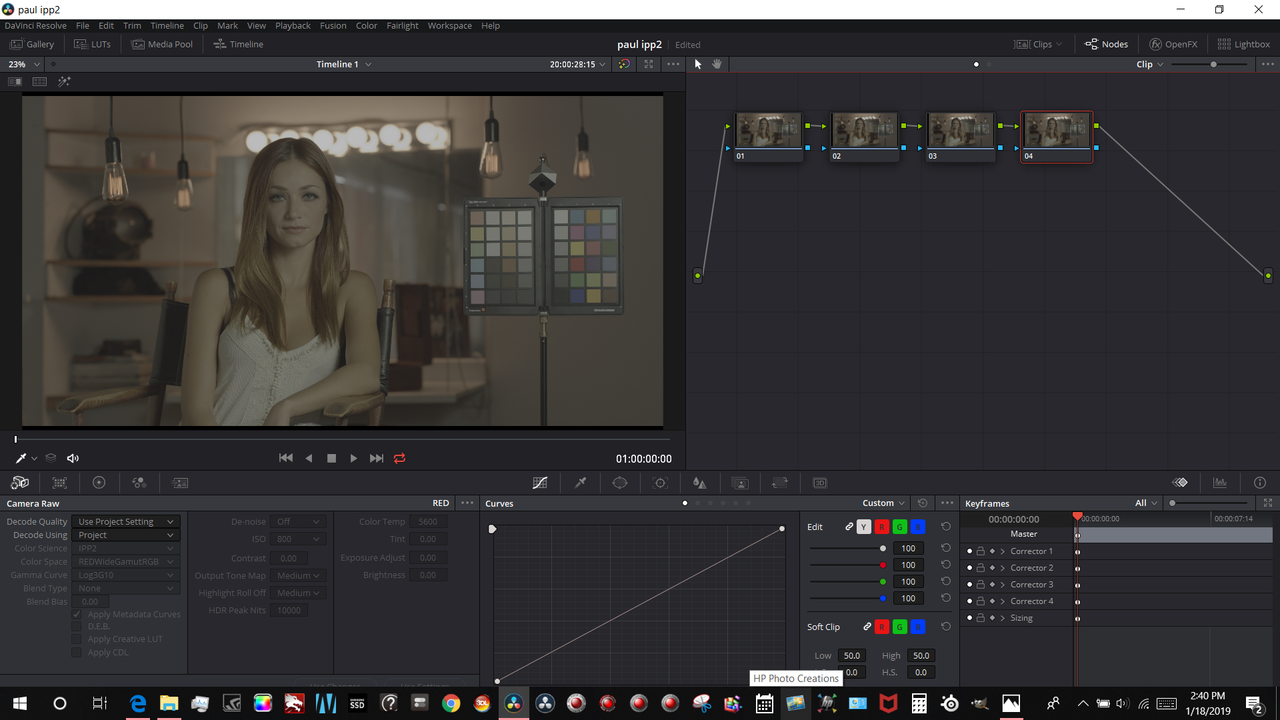

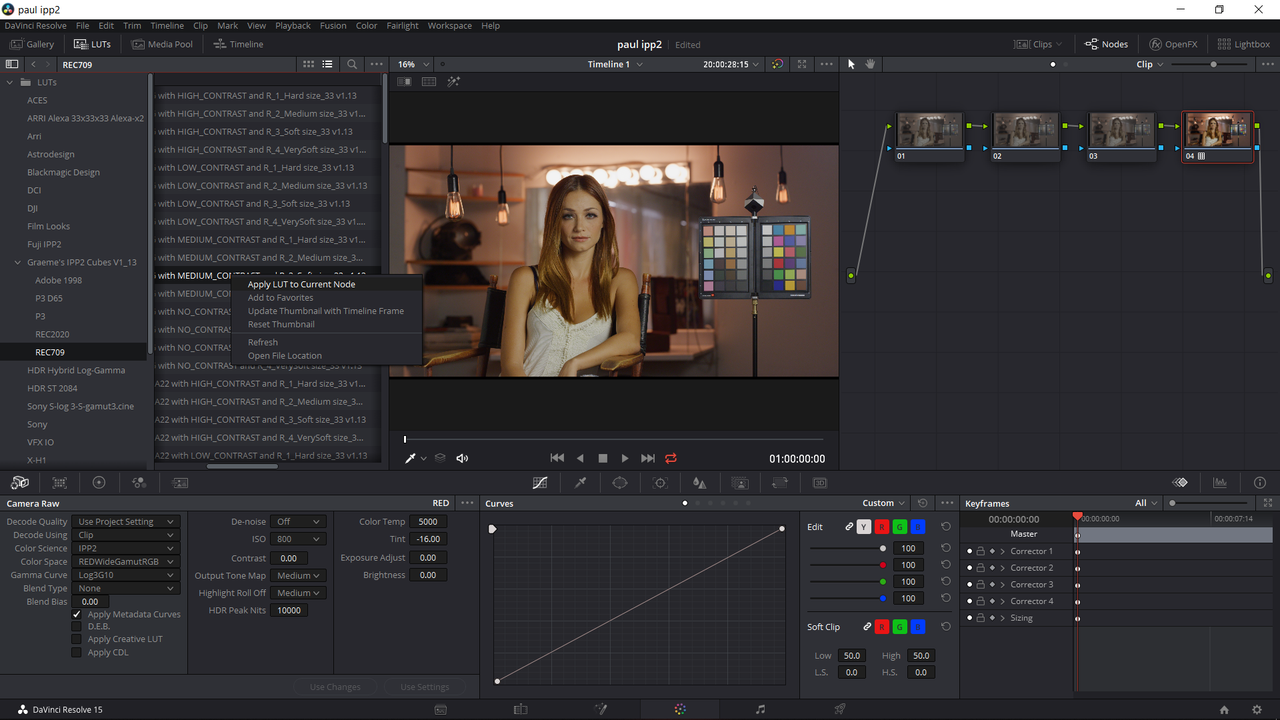

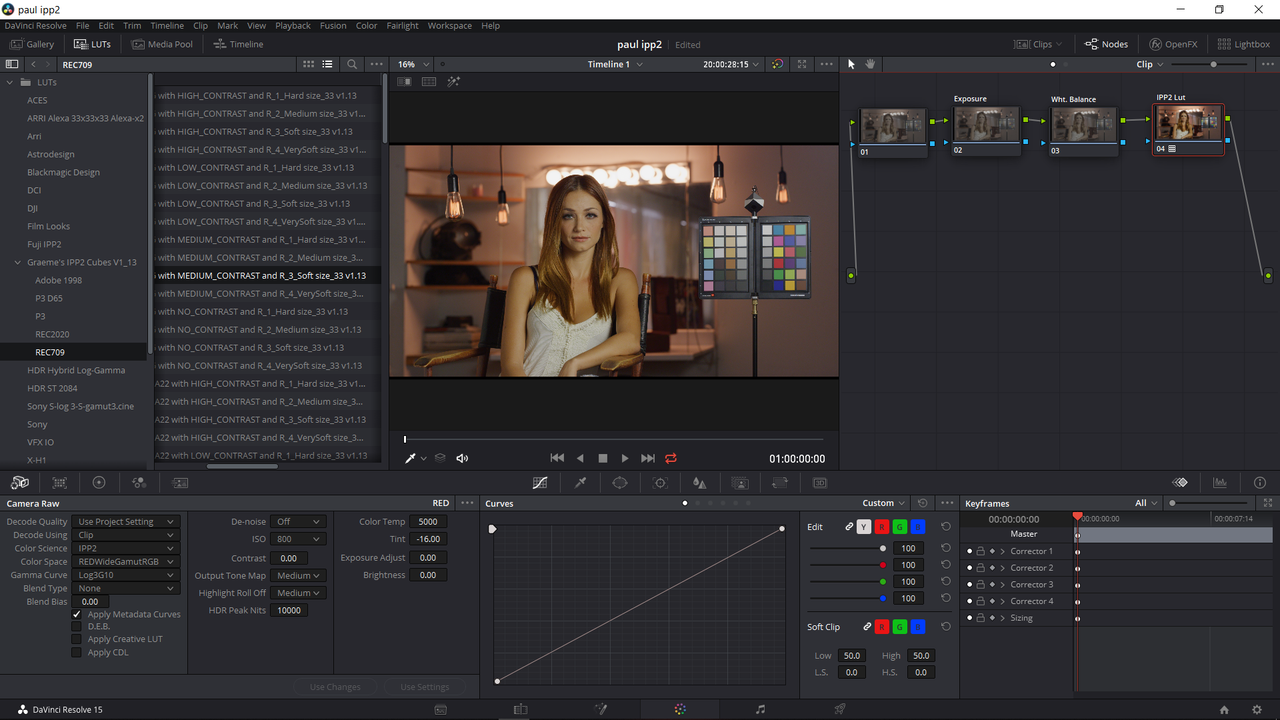

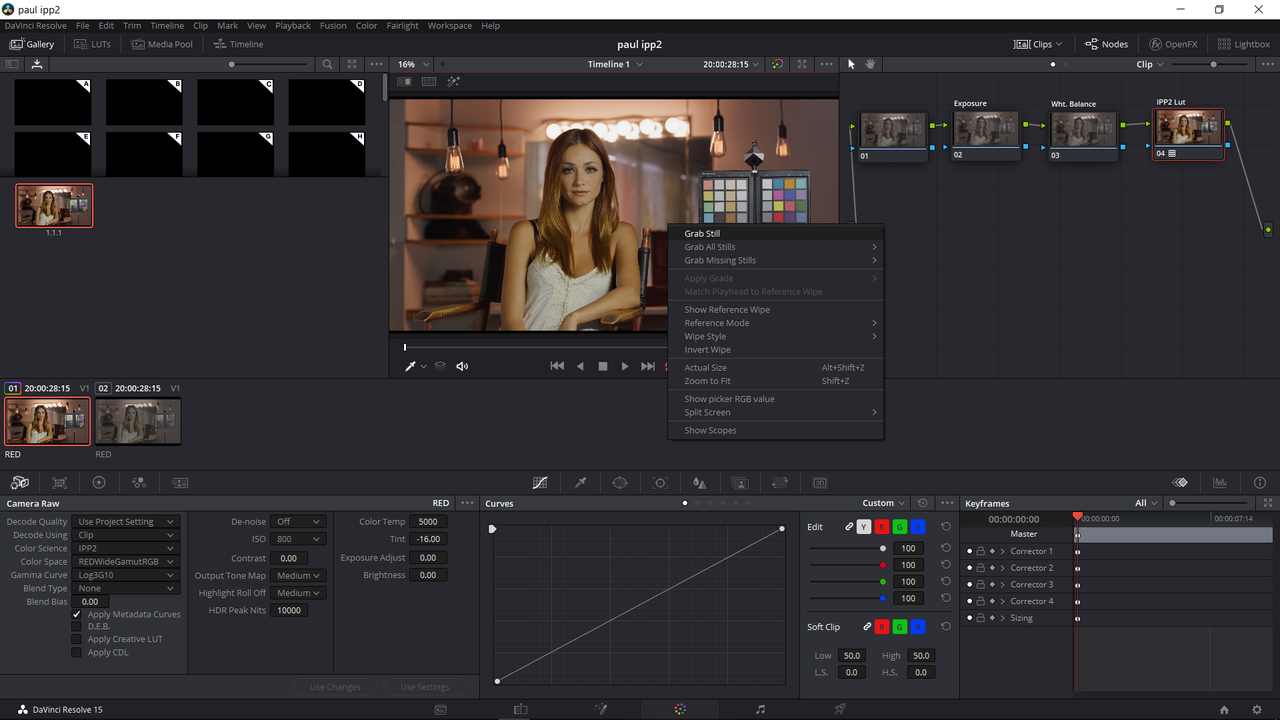

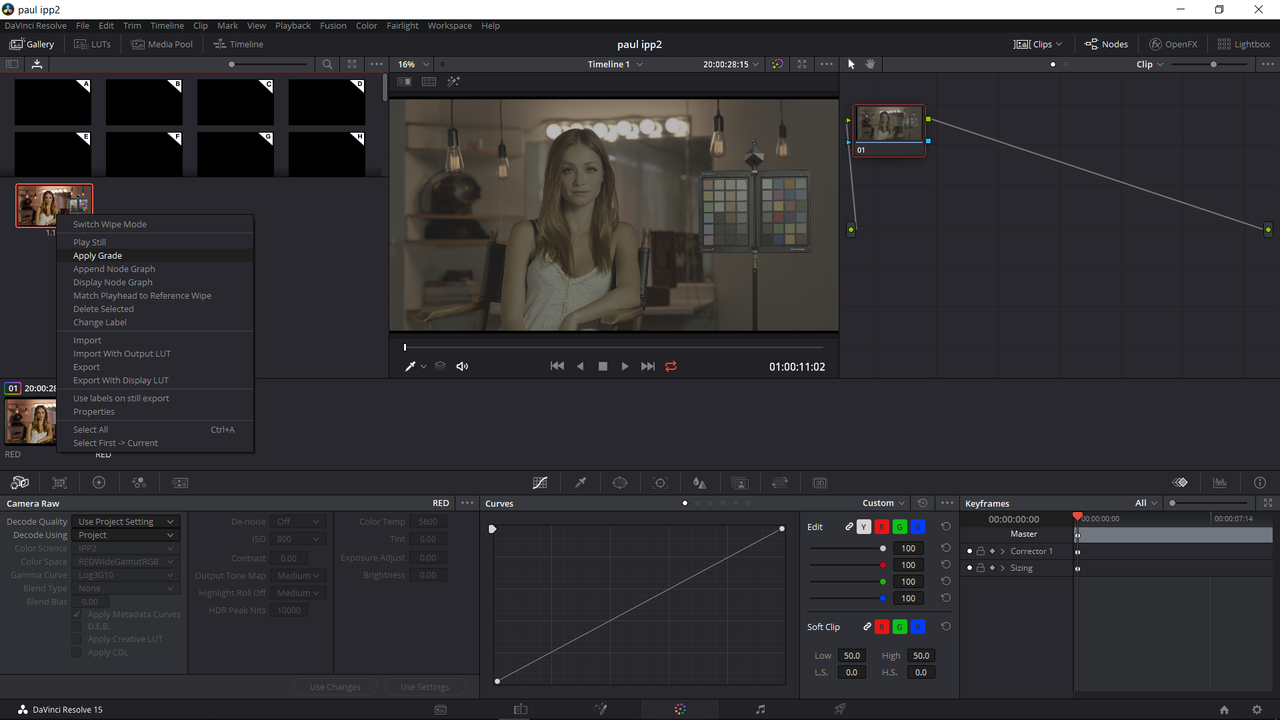

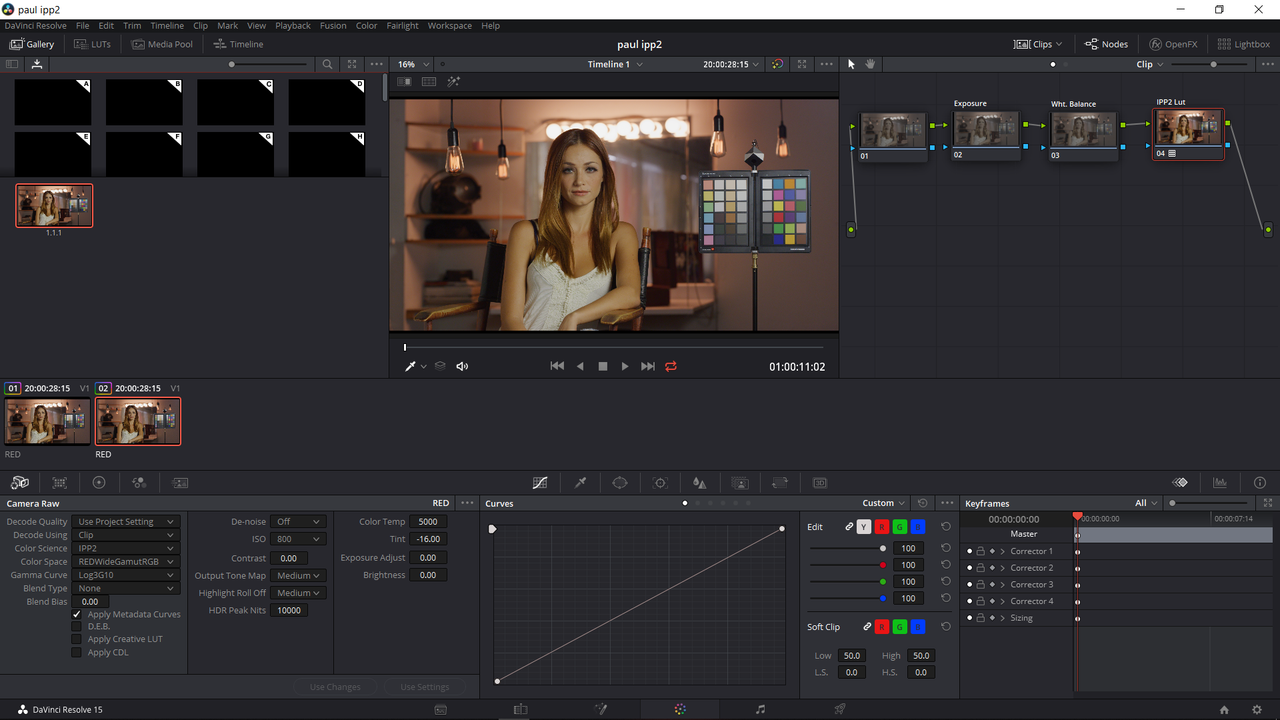

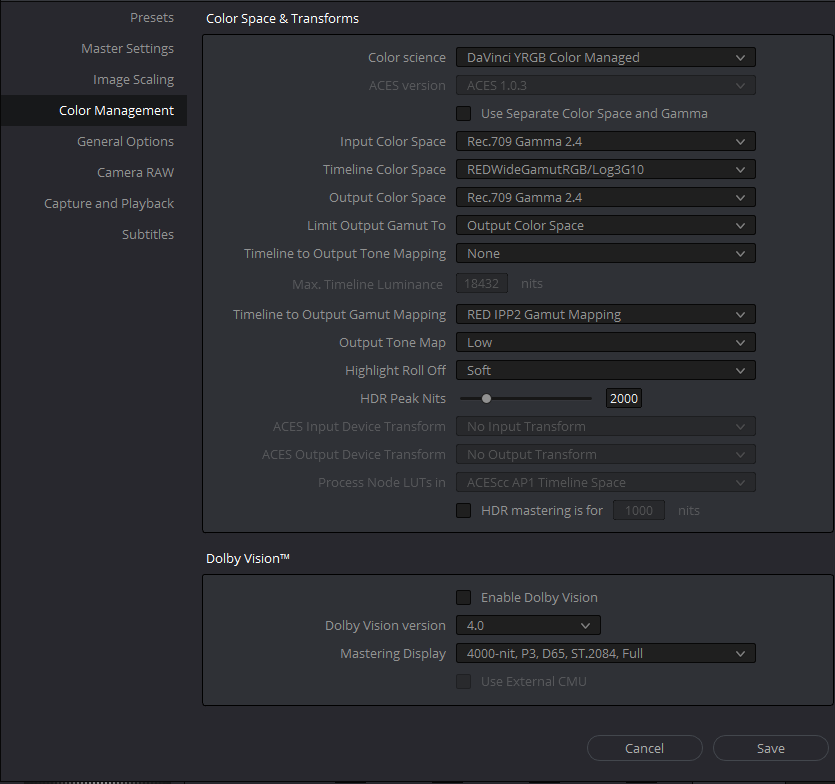

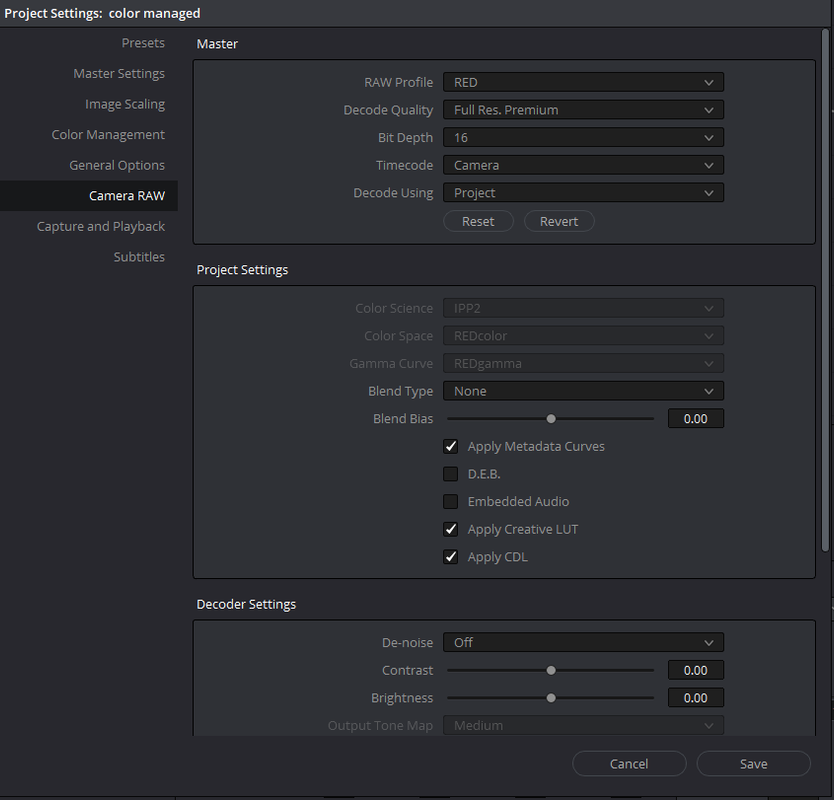

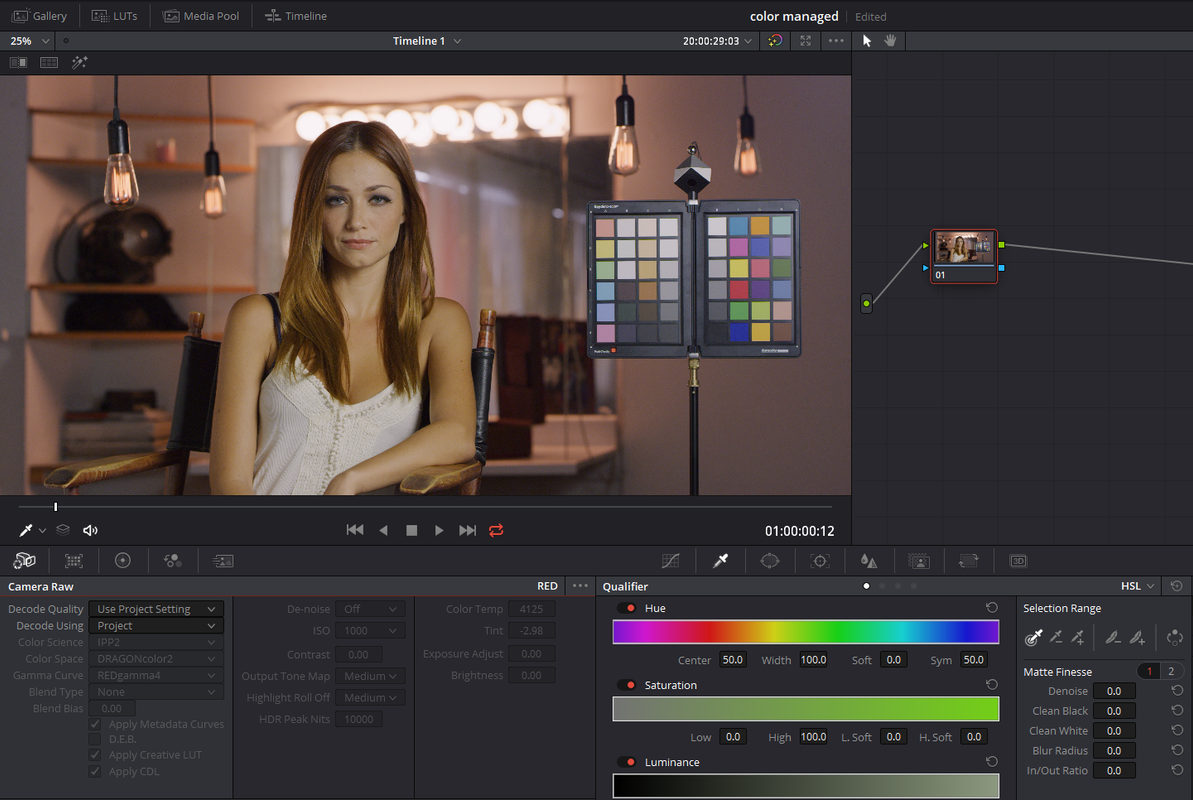

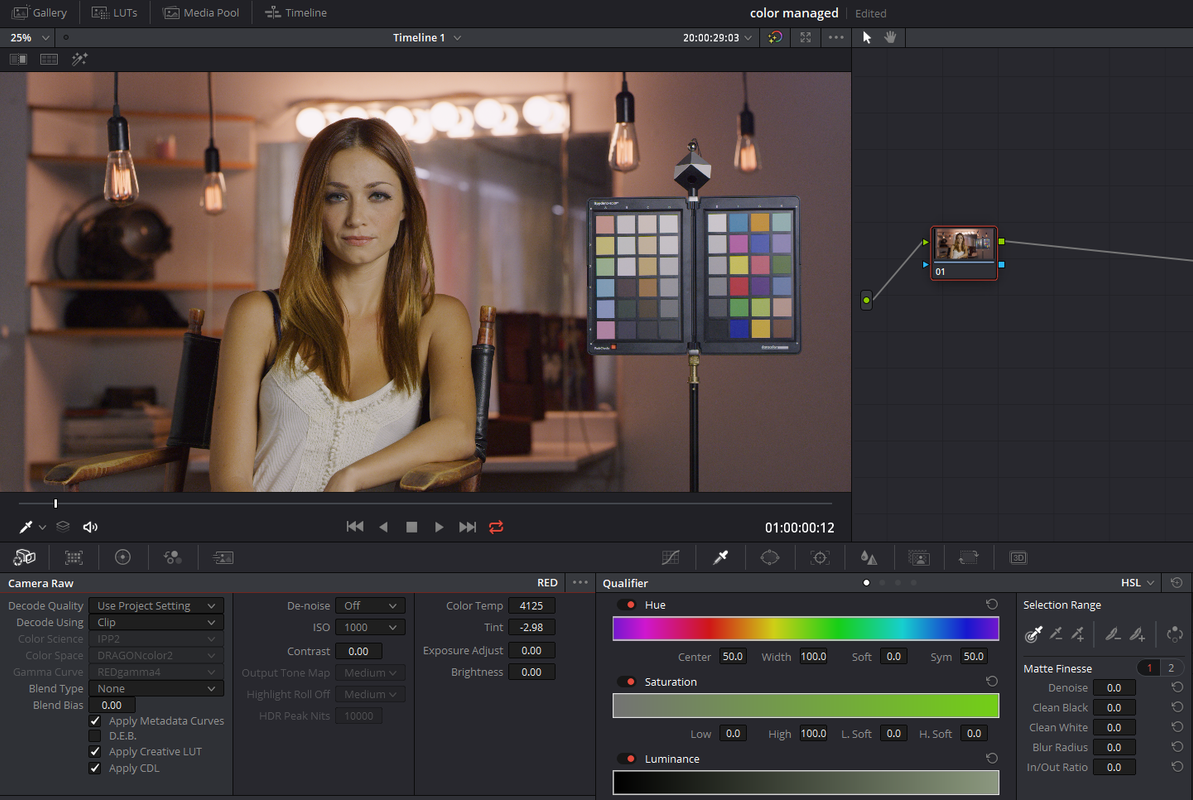

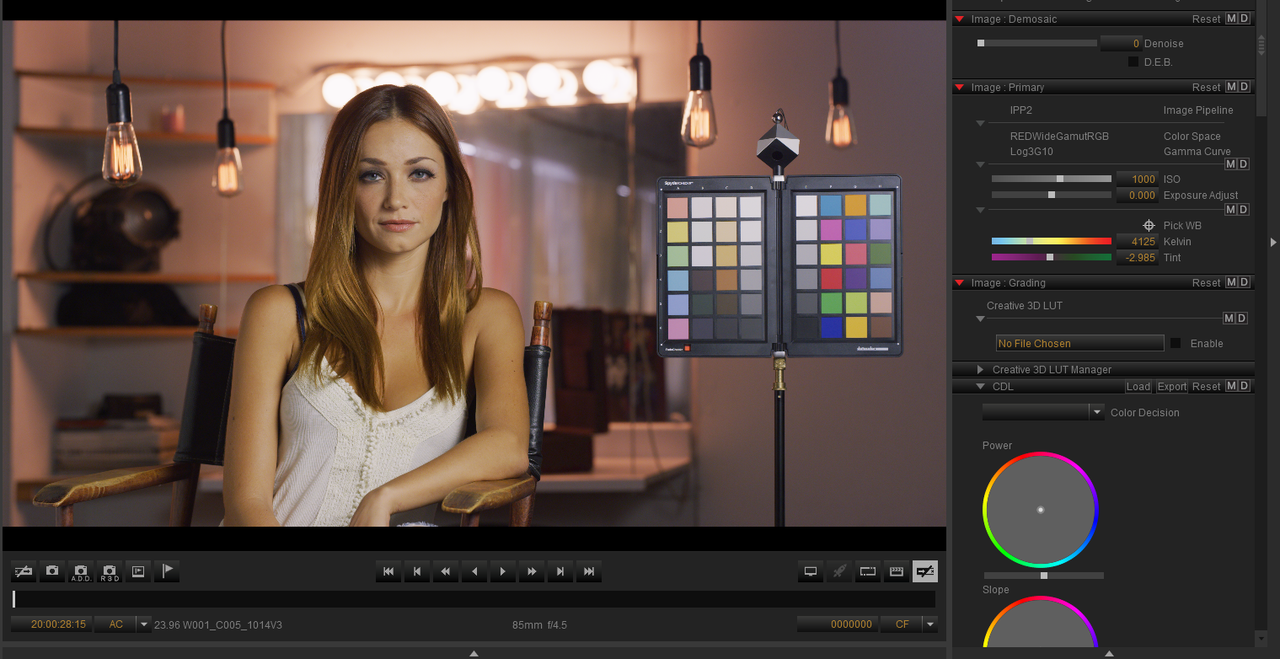

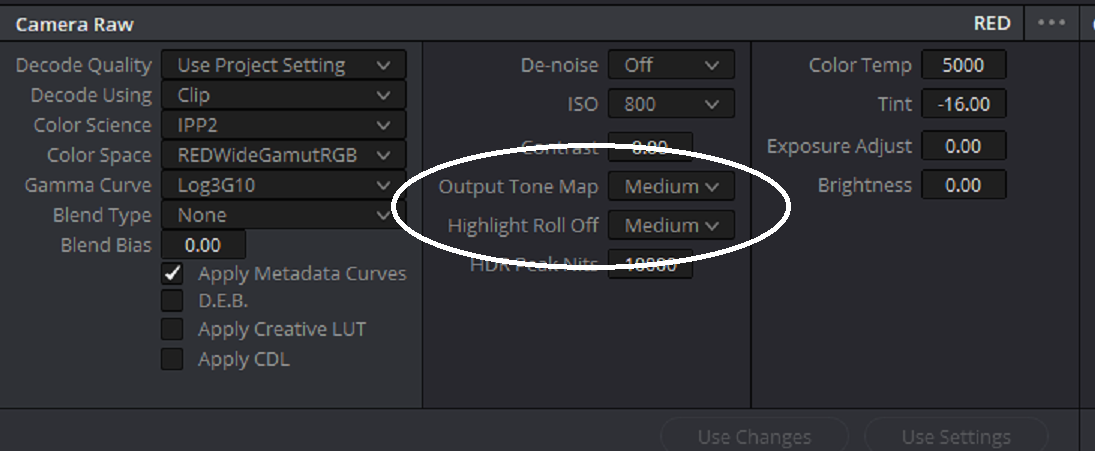

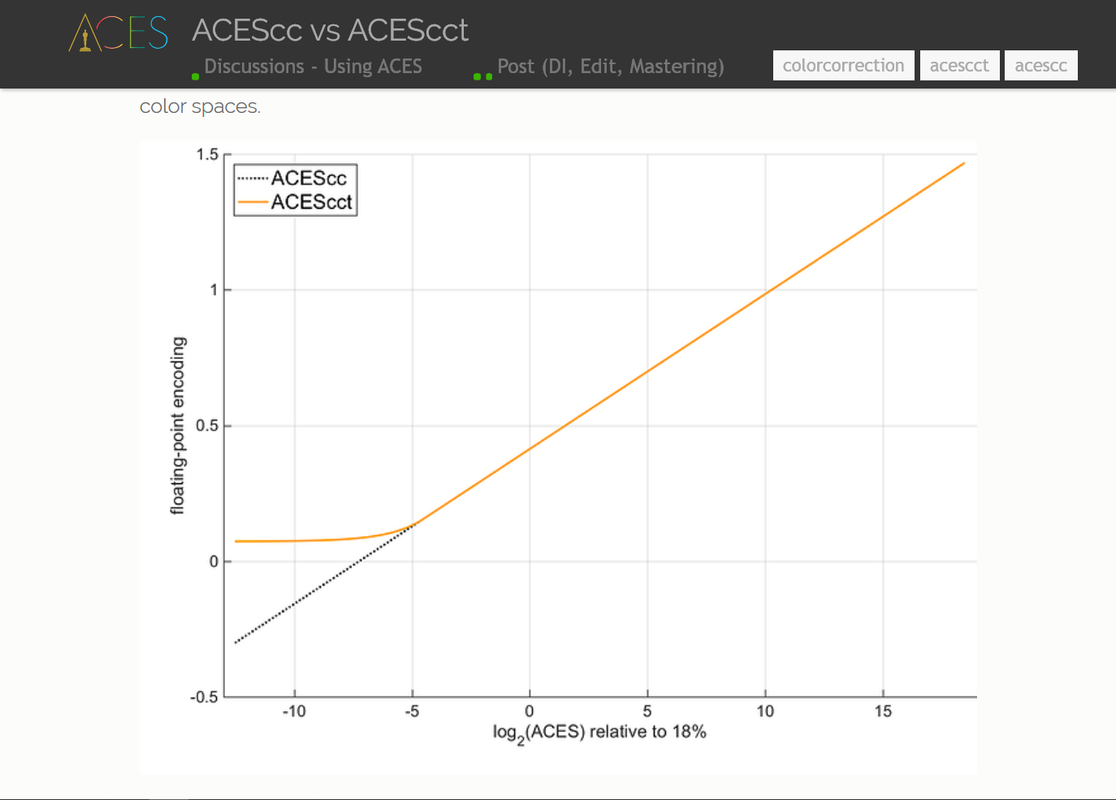

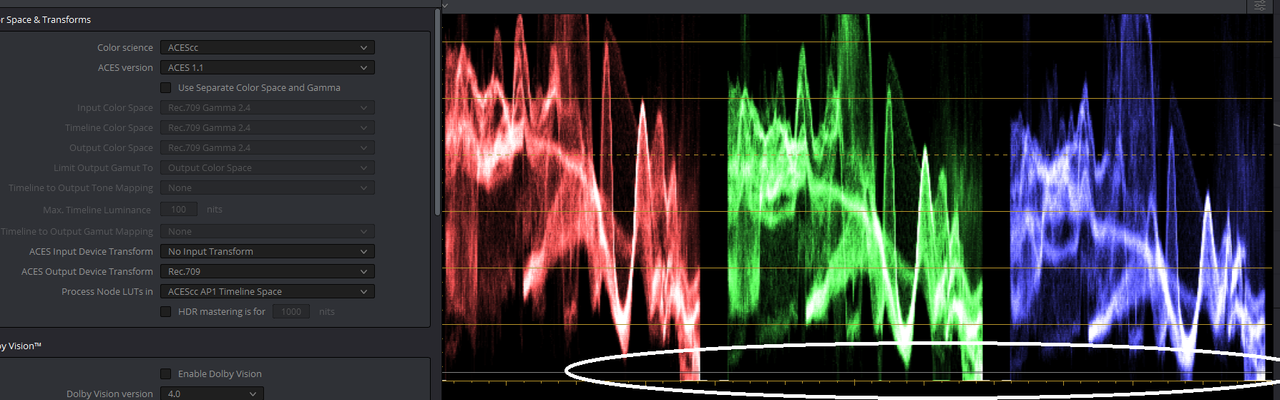

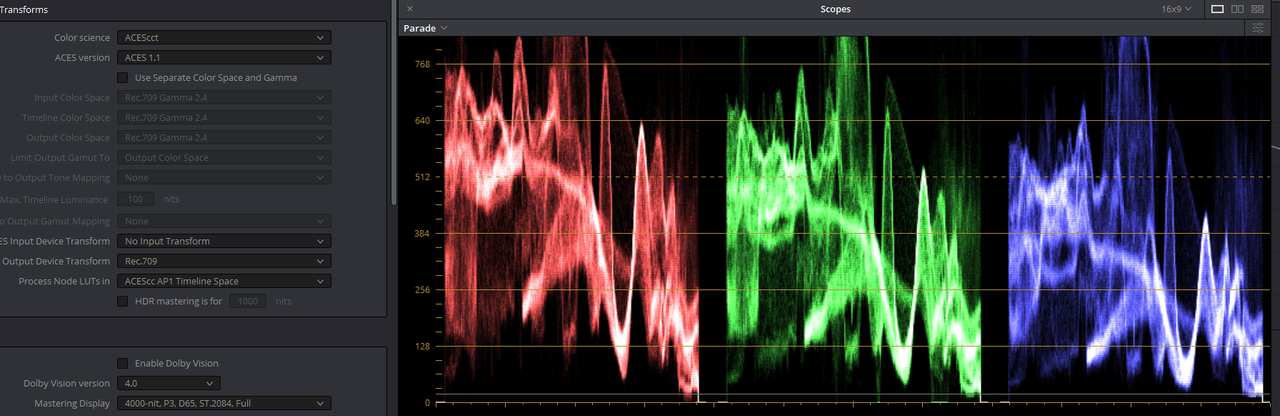

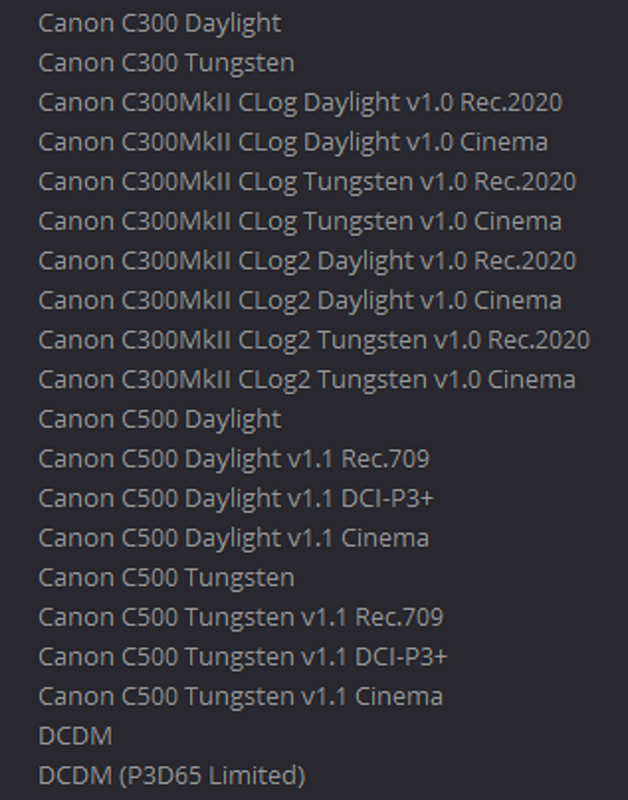

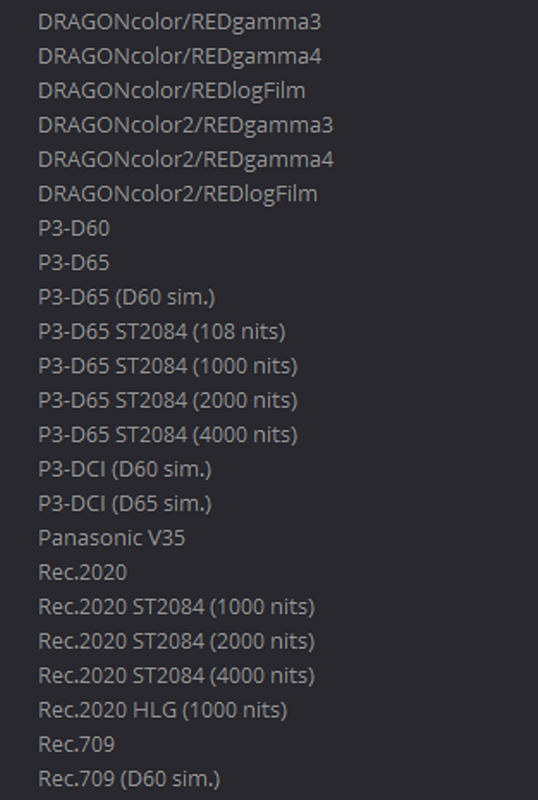

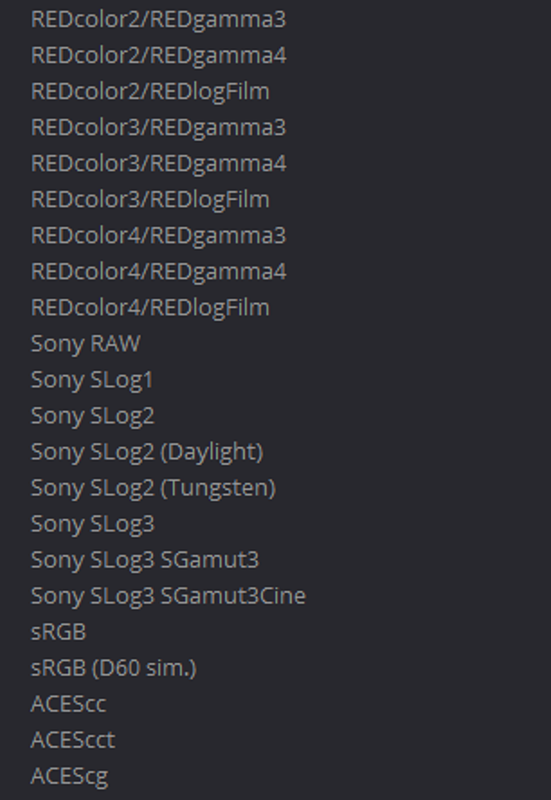

What are those curves showing?