I'm enjoying watching this from afar, but one thing I can safely say about digital cinema cameras with very good dynamic range: in their promoted use, as well as in typical grades, you often aren't clipping or crushing at max values, because part of the charm of those many stops is the highlight and shadow detail you actually get to see. Occasionally grades do go that route, though. Exploring the max range of any typical display gamma produces more video-like results.
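If you want to put a rough number on how hard a grade is clipping or crushing, something like the following could work. It's only a minimal sketch: the function name `clip_crush_fractions` is made up for illustration, and it assumes you already have the graded frame as a flat list of normalized display values where 0.0 is black and 1.0 is white.

```python
def clip_crush_fractions(values, black=0.0, white=1.0):
    """Return (crushed, clipped) as fractions of all samples.

    `values` is assumed to be a flat list of normalized display
    code values (0.0 = black, 1.0 = white). Hypothetical helper,
    not part of any camera or grading tool.
    """
    n = len(values)
    if n == 0:
        return (0.0, 0.0)
    # Samples sitting at or below black count as crushed.
    crushed = sum(1 for v in values if v <= black)
    # Samples sitting at or above white count as clipped.
    clipped = sum(1 for v in values if v >= white)
    return (crushed / n, clipped / n)


sample = [0.0, 0.1, 0.5, 0.9, 1.0, 1.0, 0.02, 0.98]
crushed, clipped = clip_crush_fractions(sample)
print(f"crushed: {crushed:.1%}, clipped: {clipped:.1%}")
```

A grade that keeps both fractions near zero is holding onto the highlight and shadow detail; one that pushes them up is heading toward the more video-like look.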

The concept of pure black and white as it pertains to various gammas and display mediums is a broad topic, especially once you dive deeper into film itself.

I don't know what DXL LUT is being used there, but their actual current gen stuff is Creative Cube IPP2 based. It does feature a slight tone map, so it's not purely a chroma solution.

That is not the magic bullet. There is no magic bullet. Just ideal or well-aimed targets, accuracy-based concepts versus perceptually pleasing ones, and similar madness.

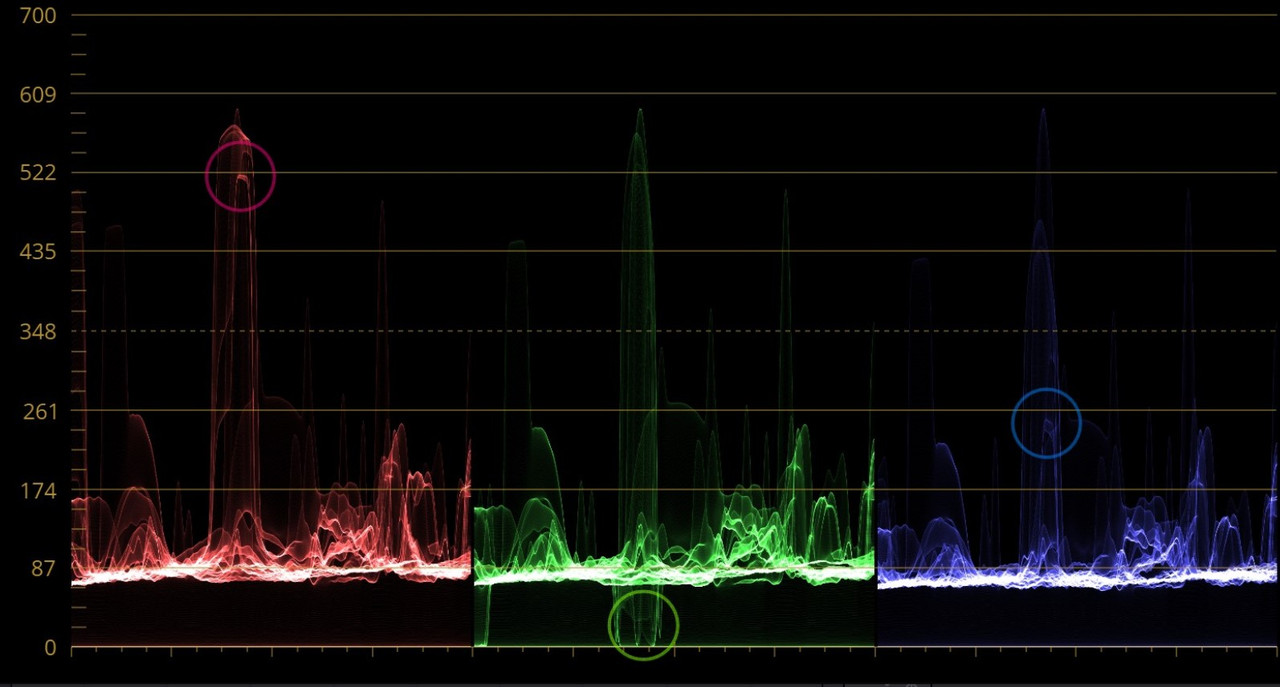

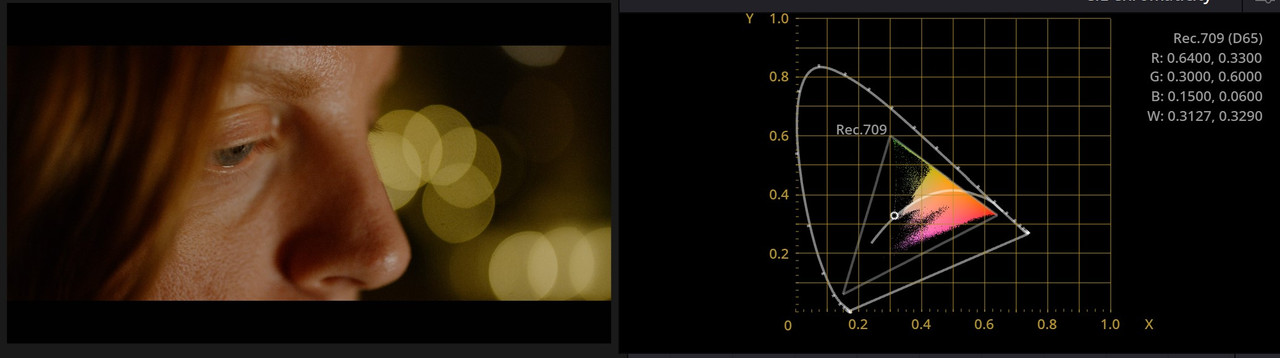

I don't have the R3D of the truck stoplights, but that's an example where IPP2 high gamut tuning ideally should help make those colors a bit more pleasing. It's a color at or near the edge of gamut, though, and in a typical grading situation it would have some gamut limiting logic applied to it. That specific case is where all digital cinema cameras show a lot of chaos, as some deal with those colors better than others on a pure captured-data basis, or vice versa.

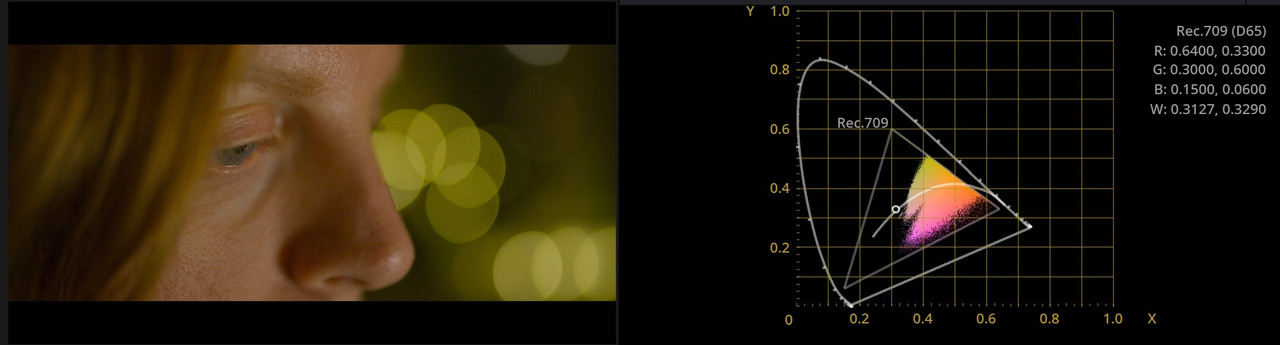

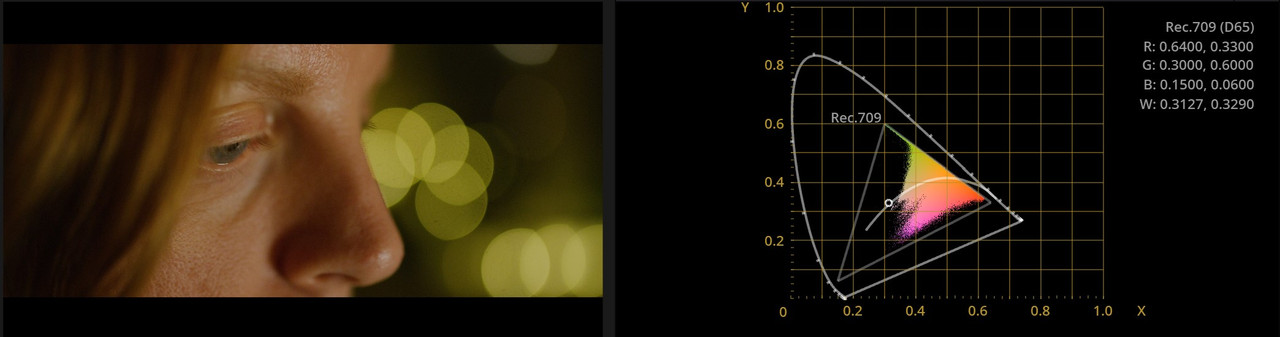

With the above white balance adjustment you are "cooling off" to create a more pleasing in-gamut look. You can also pull those colors in with some sort of gamut limiting concept within the 3200K grade.
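To give a rough idea of what "gamut limiting" can mean in practice, here is a minimal sketch of one generic approach: softly compressing each channel's distance from the achromatic axis so that out-of-gamut values land back inside. This is not IPP2's or any vendor's actual method. The function names, the `threshold` and `limit` parameters, and their default values are all assumptions for illustration, and the input is assumed to be a linear RGB triple.

```python
def compress(distance, threshold=0.8, limit=1.0):
    """Soft-compress a distance so it never exceeds `limit`.

    Distances below `threshold` pass through untouched; anything
    above is rolled off with a simple x / (1 + x) curve.
    """
    if distance < threshold:
        return distance
    span = limit - threshold
    x = (distance - threshold) / span
    return threshold + span * x / (1.0 + x)


def limit_gamut(rgb, threshold=0.8, limit=1.0):
    """Pull an out-of-gamut linear RGB triple back toward gamut.

    Hypothetical helper. The largest channel is used as the
    achromatic reference; each channel's normalized distance from
    it is 0 on the neutral axis, 1 at the gamut boundary, and
    greater than 1 when the channel is negative (out of gamut).
    """
    achromatic = max(rgb)
    if achromatic <= 0.0:
        return tuple(rgb)  # nothing sensible to scale against
    out = []
    for channel in rgb:
        distance = (achromatic - channel) / achromatic
        limited = compress(distance, threshold, limit)
        out.append(achromatic - limited * achromatic)
    return tuple(out)


# A saturated red with a negative blue channel, roughly the kind of
# value a stoplight can produce.
print(limit_gamut((1.0, 0.2, -0.3)))
# A moderately saturated in-gamut color is left essentially alone.
print(limit_gamut((0.5, 0.4, 0.3)))
```

The point of the threshold is that only the most saturated colors get touched, so the bulk of the image keeps its original look while the near-edge colors are eased in rather than hard clipped.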
