- Moderator

- #21

- Joined

- Apr 19, 2007

- Messages

- 13,390

- Reaction score

- 793

- Points

- 113

- Location

- Los Angeles

- Website

- www.phfx.com

"Usable" is a fun term that has no basis in reality. And since only a handful of people have defined a usable stop as a patch that is clearly exposed, measurable when read, and contains a specific amount of captured detail rather than noise, the best way to judge it is to actually measure it.
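To make "measure it" concrete, here's a rough sketch of how you could count usable patches off a step chart. The function name, the 6 dB SNR floor, and the 0.98 clip level are my own assumptions for illustration, not a standard and not anything a camera maker publishes; swap in whatever thresholds you actually trust.

```python
import math

def usable_stops(patches, min_snr_db=6.0, clip_level=0.98):
    """Count chart patches that are unclipped and hold more signal than noise.

    patches: list of (mean, std) readings in normalized linear signal (0..1),
             one reading per chart patch.
    min_snr_db and clip_level are assumed thresholds, not a standard.
    """
    count = 0
    for mean, std in patches:
        if mean >= clip_level:
            continue  # clipped highlight: no detail left to read
        if mean <= 0 or std <= 0:
            continue  # invalid or unreadable patch measurement
        snr_db = 20 * math.log10(mean / std)
        if snr_db >= min_snr_db:
            count += 1
    return count

# Made-up readings: one clipped patch, two clean patches, one buried in noise.
readings = [(1.0, 0.0), (0.5, 0.01), (0.25, 0.01), (0.01, 0.01)]
print(usable_stops(readings))
```

Change the SNR floor and the count changes with it, which is exactly why "usable" means nothing until you say how you measured it.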

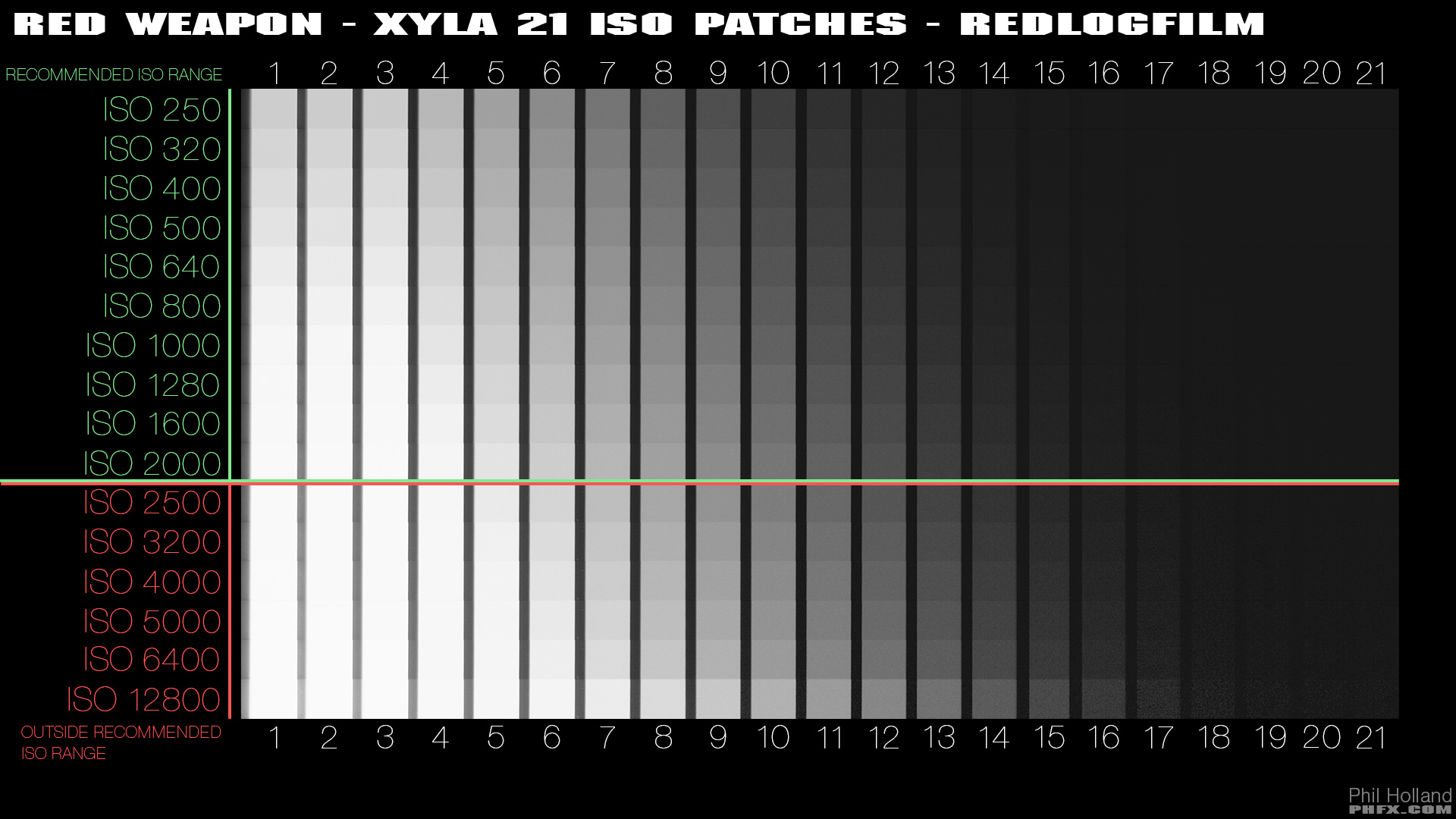

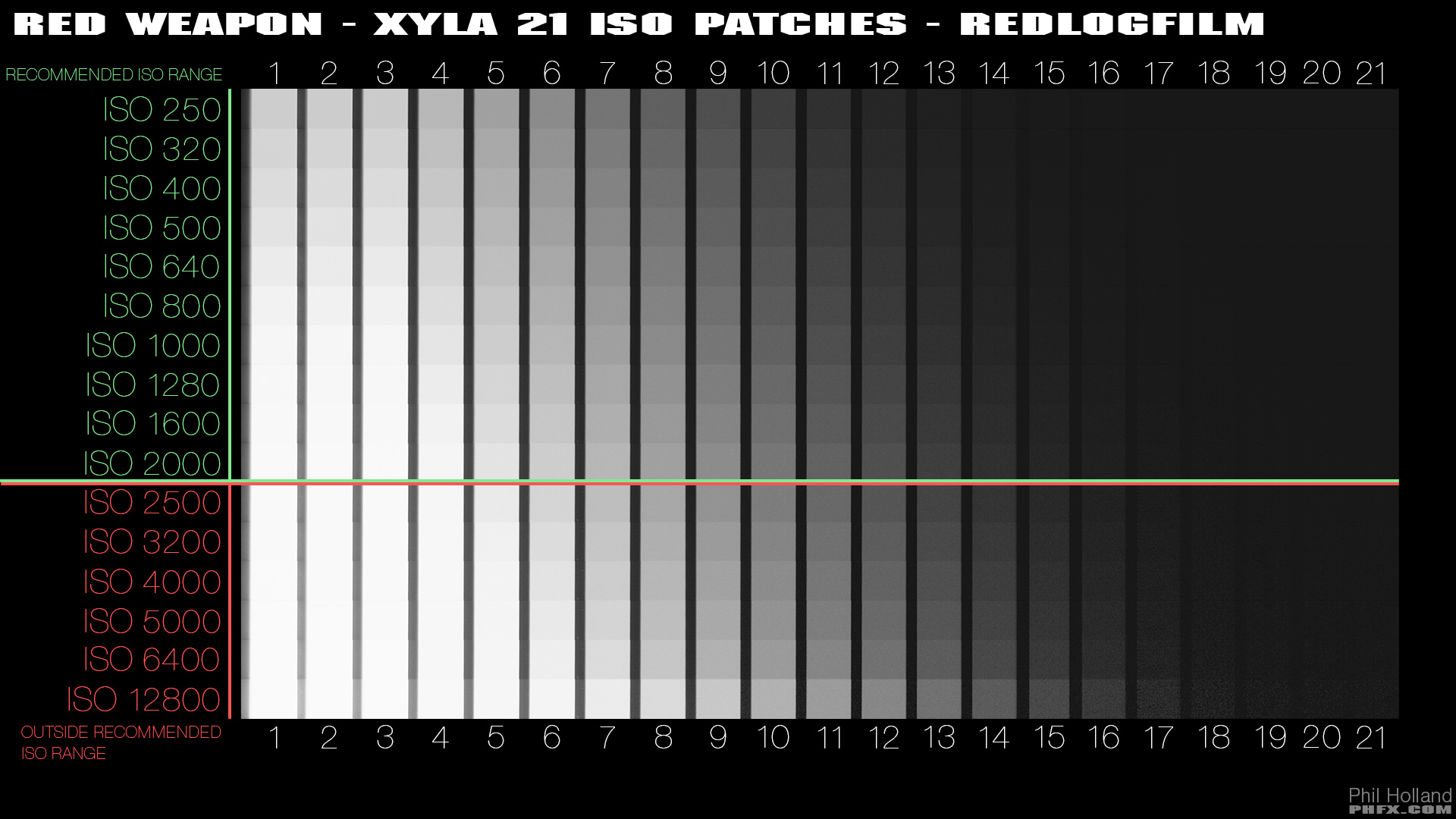

So for instance, with RED Weapon Dragon 6K through REDlogFilm using the Skin Tone - Highlight OLPF (this does make a difference, especially in highlights/clipping and noise floor texture/noise/grain) here's what you get:

A few core concepts to discuss from there. RED Dragon has always had a recommended ISO range of ISO 250-2000. With the Low Light Optimized OLPF you can certainly stretch that further into the higher ISO ratings.

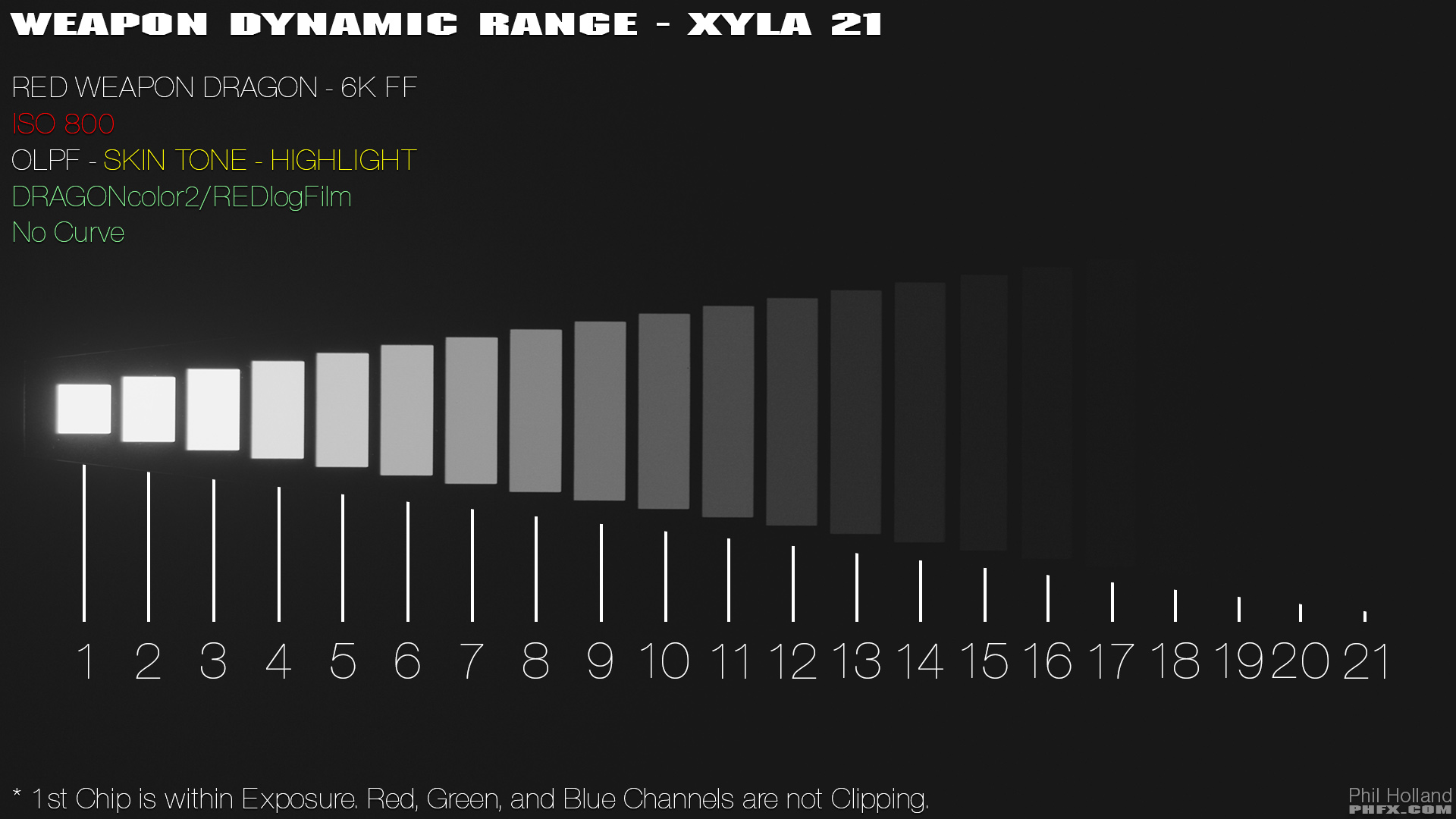

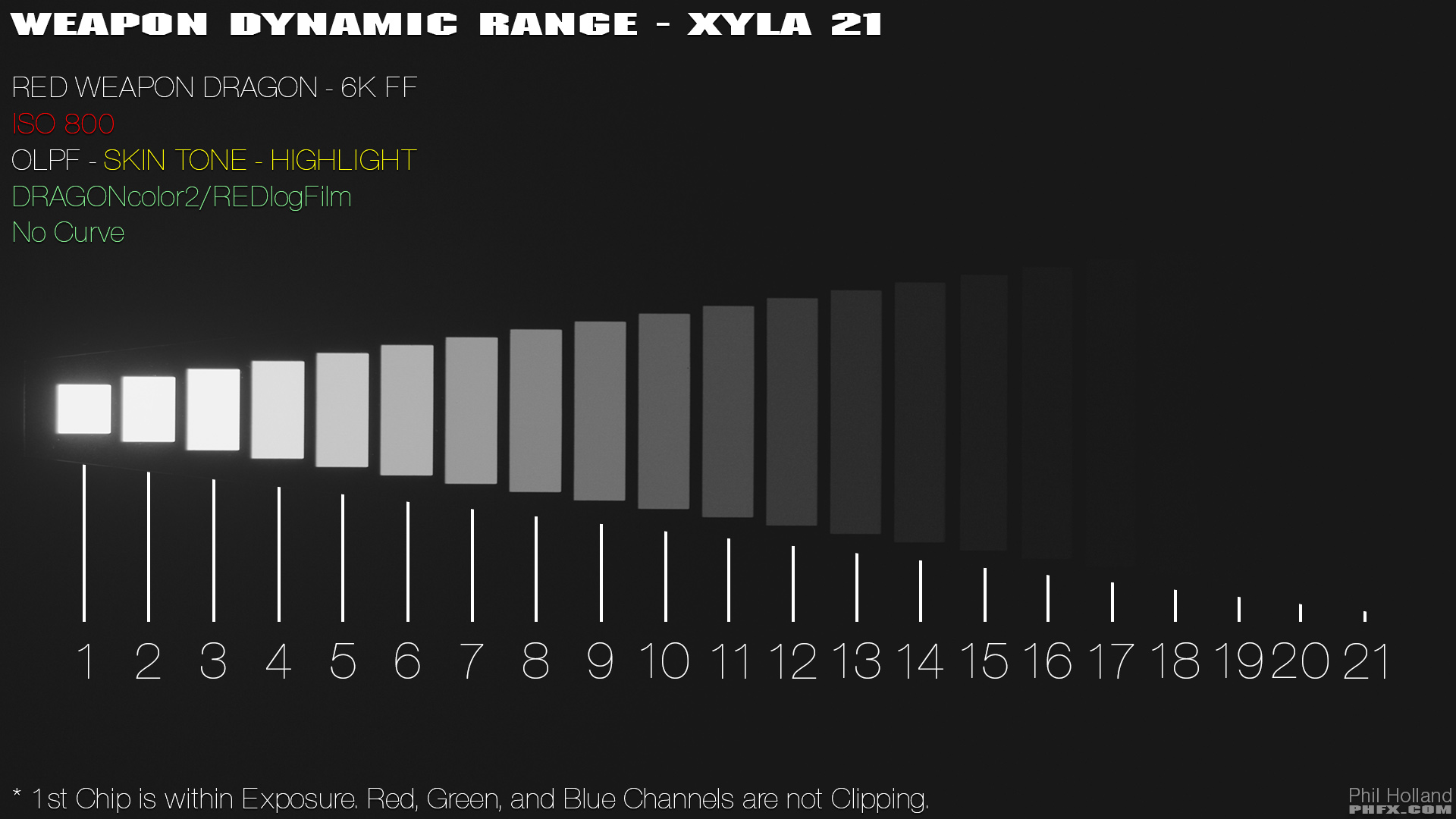

In a typical real-world scenario you have access to 16+ stops if you use the recommended Base ISO of 800.

How many patches can you "see" here:

Remember the Base ISO is typically the approximate area where you have equal stops above and below Middle Gray.
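If it helps to see the arithmetic, here's a simplified sketch of that idea. It assumes the total range stays fixed and that each doubling of the ISO rating moves one stop from below Middle Gray to above it; that's a rule of thumb, not measured data, and the function name is made up. The 16 stops and Base ISO 800 are just the figures from this post.

```python
import math

def stops_around_middle_gray(iso, base_iso=800, total_stops=16.0):
    """Return (stops_above, stops_below) Middle Gray for a given ISO rating.

    Simplified model (an assumption): the range splits evenly at base_iso,
    and every doubling of ISO shifts one stop from the shadows to the highlights.
    """
    shift = math.log2(iso / base_iso)
    above = total_stops / 2 + shift
    below = total_stops / 2 - shift
    return above, below

for iso in (400, 800, 1600):
    above, below = stops_around_middle_gray(iso)
    print(f"ISO {iso}: {above:.1f} above, {below:.1f} below")
```

Rate higher and you protect highlights at the cost of shadow stops; rate lower and it goes the other way.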

So generally speaking:

Now OLPF selection does indeed play a role here. The chart above is for the Skin Tone - Highlight OLPF, which does a great job of holding onto highlights in general. When rating the other OLPFs, I'm more in the ISO 1000-1280 range for the Standard OLPF and ISO 1280-1600 for the Low Light Optimized OLPF.

Here's a rather deep look and test at Dragon's Dynamic Range:

http://www.reduser.net/forum/showthread.php?137883-RED-Weapon-Dynamic-Range-and-Latitude-Examined
