David Mullen ASC

Moderator

Hi David

What are some of your favorite black-and-white films from the '40s and '50s that used filtration for what's commonly called "soft focus"? And what filters would you use to achieve that today, to retain as much contrast as possible?

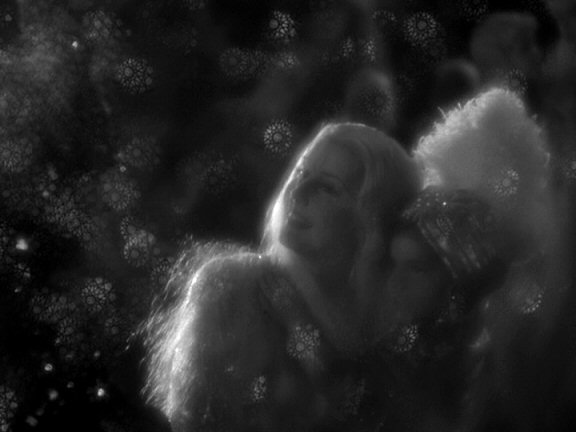

One of the most bizarre and fun movies for diffusion effects is "A Midsummer Night's Dream" (1935), shot by Hal Mohr:

He did all sorts of strange things to soften the image, including hanging a scrim in front of the lens with sequins and glitter or something like that.

Back then, you had all sorts of diffusers, many of them homemade. Nets were probably the most common diffusion filter, but you also had Mitchells, Dutos, frost-type filters, etc. You can still find many of these filters.

The Schneider Classic Soft is a good example of a commonly used modern filter based on old concepts.

They all lower contrast to some extent due to halation around bright areas. If you want subtle diffusion with no flare or contrast loss, try Tiffen's Black Diffusion-FX, but you won't get the beautiful halation.

Modern designs like Diffusion-FX, Soft-FX, Classic Softs, etc. solve a problem that some older filters had: the older ones seem to throw the entire image slightly out of focus. I find that's a problem with Mitchells, for example. True diffusion should be the overlay of a soft image over a sharp image, so the filter needs to allow some light rays to pass through unsoftened, hence the clear gaps in the pattern.
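To make the "soft image over a sharp image" idea concrete, here is a rough numerical sketch in Python. It is only an illustration, not how any real filter is designed or measured: the one-dimensional `scanline`, the box blur, and the `soft_fraction` parameter (the share of light assumed to be softened) are all hypothetical stand-ins.

```python
def box_blur(signal, radius):
    """Blur a 1-D list of brightness values with a simple moving average."""
    n = len(signal)
    out = []
    for i in range(n):
        lo = max(0, i - radius)
        hi = min(n, i + radius + 1)
        window = signal[lo:hi]
        out.append(sum(window) / len(window))
    return out


def diffuse(signal, radius=2, soft_fraction=0.3):
    """Overlay a blurred copy on the untouched sharp signal.

    soft_fraction is an assumed share of light that gets softened;
    the rest passes through unchanged, like the clear gaps in the pattern.
    """
    soft = box_blur(signal, radius)
    return [(1 - soft_fraction) * s + soft_fraction * b
            for s, b in zip(signal, soft)]


# A bright highlight (1.0) on a dark background (0.0).
scanline = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0]

fully_soft = box_blur(scanline, 2)   # everything softened: edge turns to mush
overlaid = diffuse(scanline, 2, 0.3) # sharp edge kept, with a faint glow around it

print(fully_soft)
print(overlaid)
```

In the overlaid result the step at the edge of the highlight stays steep while the dark pixels next to it pick up a little light, which is roughly the halation effect. The fully blurred version loses the edge altogether, which is closer to the "slightly out of focus" look.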

By the 1940s, sharper b&w photography was the trend and diffusion was used more sparingly, mainly just on close-ups, with exceptions like the fog-filtered scenes in "Vertigo".

You can see some jarringly diffused shots in "Spartacus", like the scene where Jean Simmons pours Kirk Douglas some water in the gladiator school. This is razor-sharp Technirama (8-perf 35mm anamorphic) photography. Douglas is lit with hard light to make him look as rugged as possible, but she's shot through a net, which makes her close-ups not match any of the surrounding shots.